№ 113 | Axbom’s Playing Cards, Creativity Tarot, The 2030 SDGs Game, Adding Confidence Levels to the BMC, Anchoring & Vigilance with AI, Yes and No, Energym, and The Alpha Generation

Wait, what?! The last issue of Thinking Things went out with ne’er a mention of card decks? How can this be? “The horror! The horror!” Let’s set things right again with this issue…

Axbom’s Playing Cards

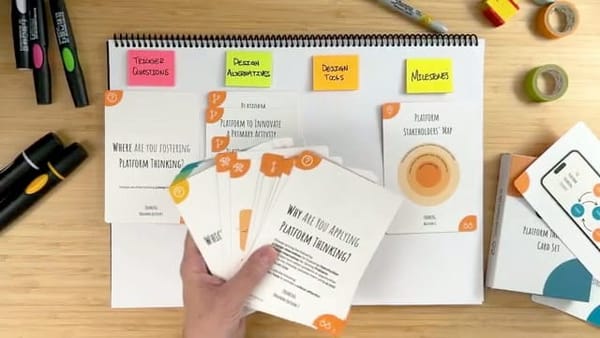

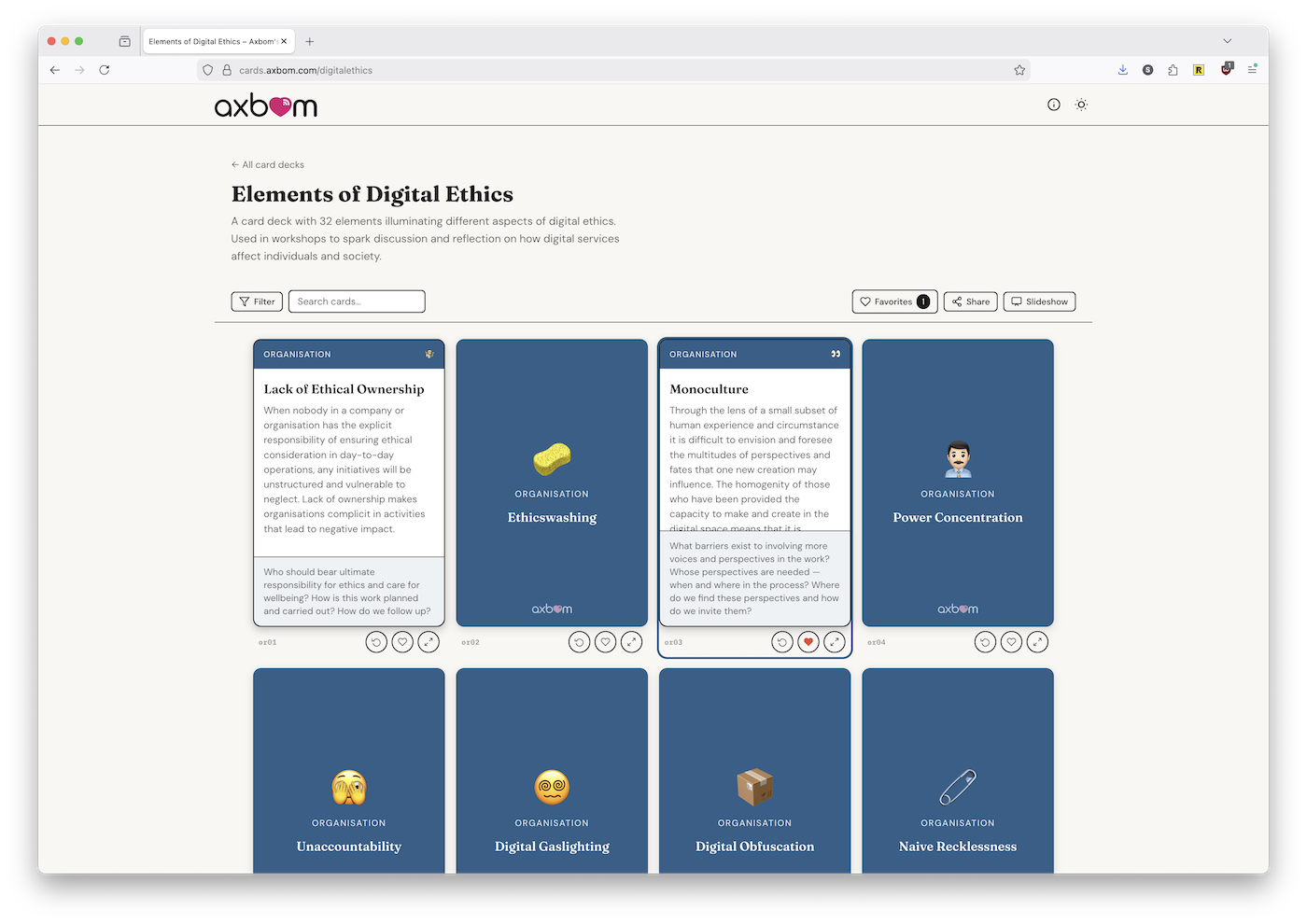

My friend Per Axbom has made 3 card decks “for critical thinking” freely available online. And it appears he built his own card platform to share these decks: Axbom's Playing Cards.

Today it's exactly five years ago since I first published The Elements of Digital Ethics. I've received so much positive feedback on that chart. People from around the world, tutors and practitioners alike, have shared with me how they make use of the tool in their work and teaching.

So, on this 5-year anniversary, I decided to make it even easier for anyone to start using the elements in everyday work. I've made them into interactive playing cards on a website – and it's free to use for all.

I found it easy to use, though Per recommends watching a quick video introducing the features of the website.

And if you’ve read anything from Per Axbom, you know the content is unquestionably good. You can visit previous issues where I’ve shared work from Per, including:

- Issue № 42 | The AI Hammer,

- Issue № 56 | Bias in Machine Learning, and

- Issue № 90 | Special Edition on Hexagon Card Decks where I challenged Per to create an Elements of Digital Ethics card deck! I guess he’s done that now, minus the hexagon shape.

What—more cards you say?!

Creativity Tarot

The Creativity Tarot card deck was mentioned at the February CardStock meetup. I don’t own a deck (yet), but from the previews on Etsy, the creative challenges seem pretty solid. Not sure about the tarot part.

Why stop at two card decks? Here’s a card-based game, also discovered via CardStock.

The 2030 SDGs Game

File this one under: Not new (made in 2016), but new to me!

The 2030 SDGs GAME takes the 2030 UN Sustainable Development Goals and turns them into a game. Why a game? Among other reasons, this

simplifies and makes accessible an extremely complex issue to a level that allows people to begin to understand, while stimulating our natural curiosity to learn more.

😍

While access to the game itself requires training, the site offers a detailed enough description to get a feel for the game aspects of this:

- personal goals, to represent “diverse people with different values”

- a shared “World Condition Meter” to monitor and track three areas: Environment, Economic, and Society

- “Time” and “Money” cards as in-game resources

- Project and Principle cards you pick up along the way

Personally, I’m really curious as to how this has been tuned. Is there a successful win condition, where every player “wins” or is the point to highlight the tensions and trade-offs necessary for a sustainable world? I’m reminded of the more recent (2022) simulation game Half-Earth Socialism which did a fantastic job promoting the really difficult decisions—and sacrifices—needed for a habitable world (see my comments in issue № 63).

Adding confidence levels to the BMC

I’m sharing this LinkedIn post for one simple reason: I’ve never thought about adding confidence levels to the business model canvas (or any canvas for that matter!). But why not? It’s an extra layer of information that—especially in the case of the business model canvas—seems useful & relevant.

Anchoring & vigilance with AI tools

I love it when someone offers a crisp articulation of something that’s been—for me at least—a jumbled, rambling explanation.

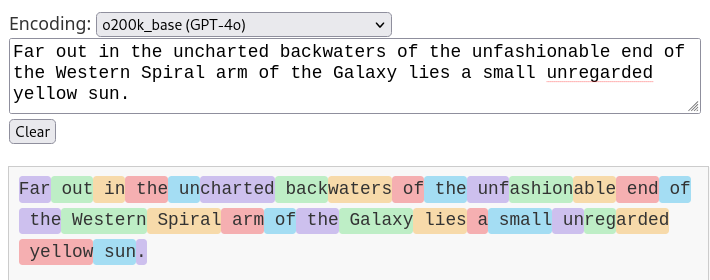

While there’s much more to “What is a token,” I want to focus on the succinct way that Jennifer Moore gives memorable labels to two of my human concerns about working with AI models: Anchoring and Vigilance. I covered the dangers of anchoring in my essay “9 Human Challenges with Using AI Co-Pilots,” where I wrote this:

As humans, once information is handed to us, we tend to think in the same conceptual direction. Whether that’s building upon, refining, rejecting, editing, whatever—we are reacting to that initial information. It’s a feature (or bug?) of our silly little brains.

Vigilance is the other term I’ve been searching for to describe—no, capture—all that we have to be on guard against when using AI tools:

Vigilance means… remaining alert for potential hazards. And it’s really, really hard. It’s why you feel exhausted after a day of driving. Humans are just bad at this…

The marketing and the default behavior of these chatbots is to skip straight to the end and have it produce─whole cloth─text resembling the higher order analysis. That's a problem. That result can't be trusted, but verifying the result is more work than doing the analysis in the first place. That's exhausting, and people can't sustain vigilant critical skepticism against what feels like a zip bomb targeting human attention. Even less so when the output is something they want.

I’m all for things we can think with, tools that augment and extend our limited thinking abilities. But when things offer to think for us, we should be very, very cautious. Red flags. Alarm bells. All of it.

The problem is this outsourcing of judgement is increasingly unavoidable. The line between using an AI as a tool to retrieve information and trusting it to organize, synthesize, and summarize information is often very thin, if it exists at all.

For similar musings, check out “What does a tool owe you?,” especially this part on “devotional practices”:

When I say that creativity is a devotional practice, I don’t mean it in a religious sense. I mean that these are things we do that have intrinsic value — value in the doing, not just in the output. The act of writing an essay teaches you what you think. The act of designing something teaches you what you care about. The act of working through a problem teaches you how to think. These processes can’t be outsourced without losing what made them valuable. You can’t delegate your thinking to a tool and still expect to truly understand something. The transformation is in the process, not the outcome.

Technology that catalyzes these devotions makes them more valuable. Technology that replaces them makes them irrelevant, destroys the dignity that underpins doing truly amazing work. That’s the choice that’s being made in every AI tool being built right now, and almost nobody applying the technology is making it consciously as part of their design process.

Yes and no

Note to self: I need to spend some time with the Anti-Brief web site.

In the meantime… I kind of love these musings on the nuances of yes and no, from David Byrne’s 2006 book Arboretum:

The boundary between yes and no isn’t sharp. There are many shades in between: maybe, possibly, uncertain, doubtful but perhaps. And these positions are not fixed. They shift over time — what feels like a no can turn into a yes, and the other way around. The whole spectrum is in motion.

As for inclusion in The Anti-Brief web site, designer/contributor Elio Salichs has this to say:

To me, “yes” and “no” can feel too rigid—almost arrogant—when presented as absolutes. It’s not that they don’t exist, but I feel we are often pushed to choose in between them too early.

This reminded me of a diagram I created a long, long time ago to clarify additional responses beyond a simple yes or no:

Energym (AI satire video)

By now, chances are good that you’ve seen this satirical video for a fictional company, Energym. It’s been aptly described as “a Black Mirror episode, in 45 seconds.” Anyway, if you haven’t seen it yet, check it out.

I think we’ve established that I love satire (see the previous issue of Thinking Things where I gushed about the movie Good Luck, Have Fun, Don’t Die!). That said, I also fully agree with this comment on LinkedIn:

Anyone tired of normalizing dystopian futures?

All this satire, after a time, ceases to warn us and starts to normalize dystopian futures. This is something I called out in my Euro IA 2020 keynote Hopeful and Powerless? Design in a Crisis when I spoke on Hope, and the need for more hopeful stories about the future.

So, in response, I want to share some positive, hopeful visions of the future!

First, an economic collapse 😬… that then leads to a global care crisis…? 🤔

“The 2028 Global Care Crisis” describes exactly this, in detailed economic terms. (Note: This was written in response to this much less hopeful piece, “The 2028 Global Intelligence Crisis.”) While one of these is more probable than the other (a change in societal values often takes decades), I found this next ‘find’ very hopeful. If anything, it makes something like the “Global Care Crisis” feel more plausible…

“The Alpha Generation – A World of Hope and Change”

I want to wrap up this edition of Thinking Things with a link to “The Alpha Generation – A World of Hope and Change” by Katerina De Pauw. These are observations made after attending a forum of 2,000 international school parents and teachers.

It’s a hopeful read. In it, she notes the following themes that might describe the Alpha Generation:

- A disconnect from tech-driven lifestyles; or more accurately a thoughtful embrace of technology that is “designed to serve humanity, not consume it.”

- Yearning for nature and things made by hand; a rejection of “materialism and shallow consumerism.”

- Empathy, awareness, and resilience: “Having witnessed global crises, they are not naive to the state of the world, but they are far from defeated.”

- The importance of relationship and family skills, especially “in a world where many societal norms around marriage, parenthood, and relationships are being redefined.”

- A rejection of capitalism and politics.

I especially enjoyed the section near the end, identifying things we should be teaching this generation, as they overlap with some of the topics I tend to explore here. Critical thinking. Sustainability. Mindfulness…

And where's the second positive, hopeful vision of the future I promised? After reading these observations, Aksinya Staar shared this future scenario on LinkedIn:

Forget "Digital-First." Gen Alpha’s future is Human-First. They aren't heading to the Metaverse; they’re going back to the garden with a smart toolkit. Data-tracking for profit will be seen as a barbaric practice of the past. Tech will be like plumbing: powerful but hidden. A move from "owning" to "stewardship." Cities will become Urban Forests where the "nature/city" line blurs. The leaders of 2060 won't be aggressive CEOs, but skilled community builders...

Boom.

Random Fun Stuff

…with 100% more images!

- When it’s not socially acceptable to roll the dice, there’s the Analog Dice Simulator.

- Digitized Signatures turn your name (or any series of characters) into a vector line shape, like this one:

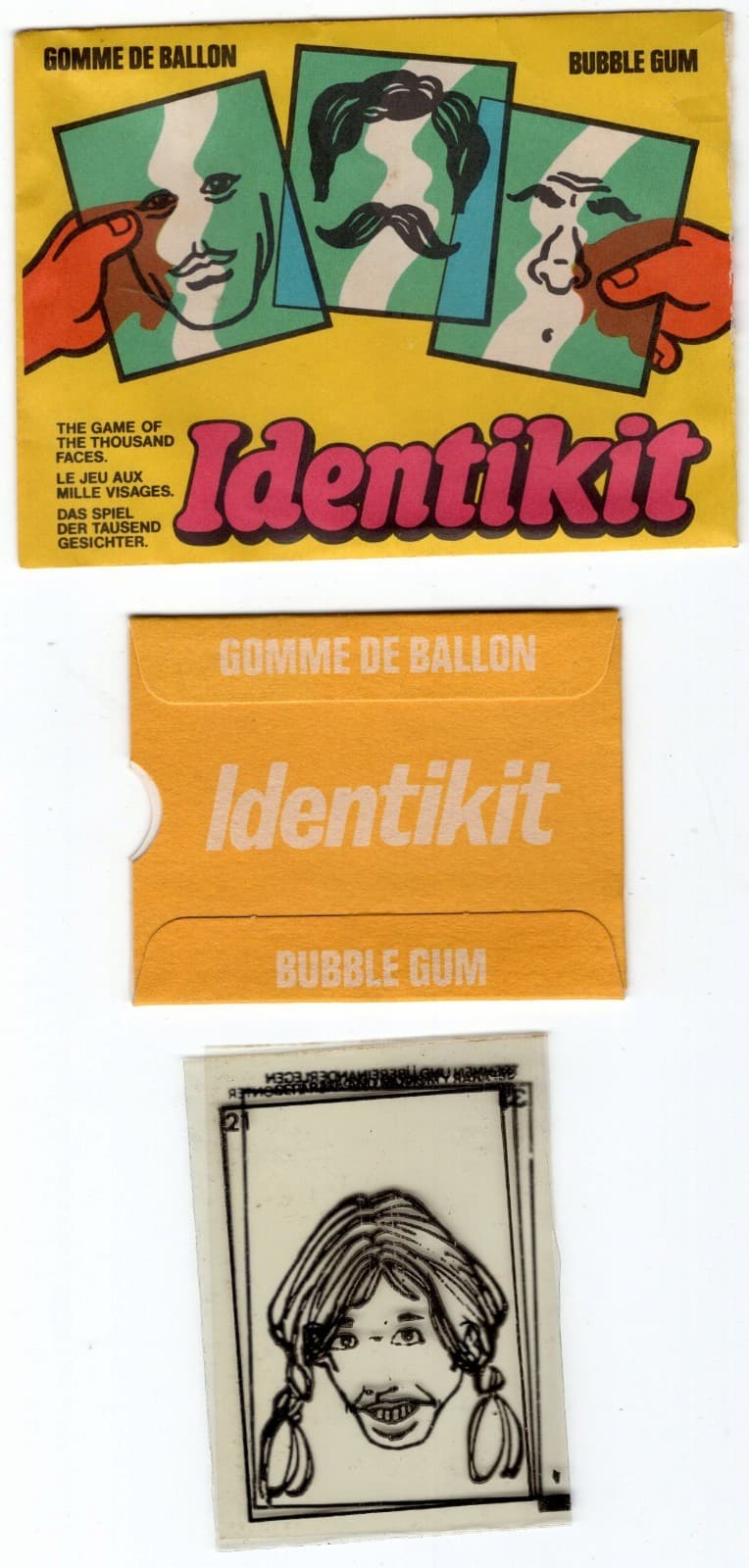

- Identikit—“the game of the thousand faces” uses (used, as this is long out of print!) transparent cards that are stacked in a mix-and-match fashion to create different faces.

- A conversational party game, Card Assassins challenges you to “assassinate your targets by getting them to say one of your Kill Words.” (Also, found via the CardStock meetup! Really, you should go to these if you like cards.)